AI AND DEMOCRACY EVENT LAUNCHES INSTITUTE FOR ETHICS IN AI

An expert panel discussion about AI’s interaction with democracy will mark the launch of Oxford University’s Institute for Ethics in AI today (16 February).

Published: 16 February 2021

The Institute for Ethics in AI aims to tackle major ethical challenges posed by AI, from facial recognition to voter profiling, brain-machine interfaces to weaponised drones, and the ongoing discourse about how AI will impact employment on a global scale. It will be part of the Philosophy Faculty and based in the Stephen A. Schwarzman Centre for the Humanities.

AI is predicted to have significant effects on democracy, and this will be the topic of the launch event. The questions which the panel will discuss include:

- How can AI enhance the quality of public decision making without usurping our rational autonomy?

- Can AI enable more radical forms of democratic political participation?

- Does AI, and the power of big tech corporations that deploy it, pose a threat to the quality of public reason on which a thriving democracy relies?

- How can AI applications further vital public goods - such as protection from the Covid-19 pandemic - whilst respecting individual rights?

The panelists will be: Professor Joshua Cohen, political philosopher at Apple University and Distinguished Senior Fellow in Law, Philosophy and Political Science at Berkeley; Professor Hélène Landemore, Associate Professor of Political Science at Yale and an expert in democratic theory; and Professor Sir Nigel Shadbolt, Chair of the Institute’s Steering Group and Principal of Jesus College, Oxford.

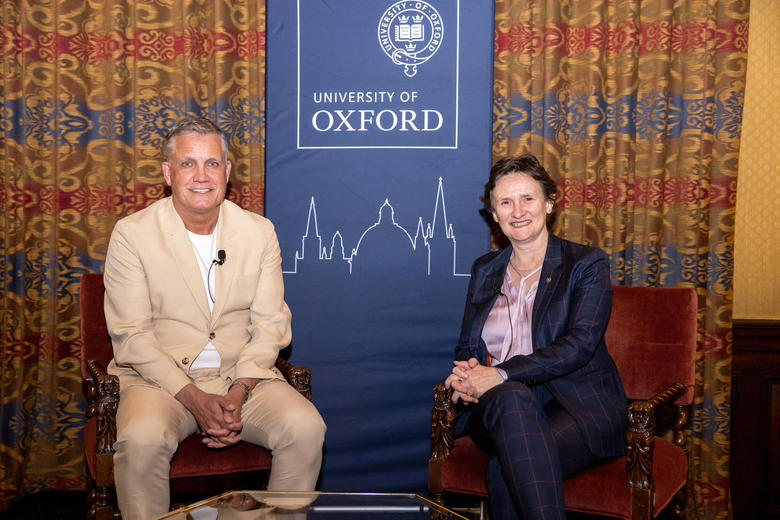

The panel will be introduced by Oxford’s Vice-Chancellor, Professor Louise Richardson, and chaired by the Institute’s inaugural Director, Professor John Tasioulas.

Professor John Tasioulas said: “An essential aspect of our human dignity is the capacity to exercise our rational powers in reaching decisions both as individuals and as societies. The capacity of AI-driven technology to do things that normally require the use of our rational faculties poses serious challenges. One of the most important of these challenges concerns the fate of democracy itself. I am delighted that our launch event will bring together three leading thinkers to address the role of AI in a democratic society.”

Professor Louise Richardson said: “It is wonderful to see the launch of this new Institute which will draw on deep reserves of expertise across the University in humanities, the sciences and the social sciences to address some of the most critical issues of our times.”

The Institute was announced in June 2019 as part of a £150m gift to the University from Stephen A. Schwarzman, who is Chairman, CEO and Co-founder of Blackstone, one of the world’s leading investment firms. Mr Schwarzman said: “Artificial intelligence is changing everything and ensuring it develops in a responsible and ethical way is arguably the most important challenge of our time. The Institute for Ethics in AI will put Oxford in a crucial leadership position at the center of the global response to this challenge. The Institute has assembled an outstanding team led by John Tasioulas and I’m certain they will play an important role in the years ahead.”

Today, the Institute is also announcing an outstanding appointment to its academic team. Professor Vincent Conitzer is joining as the Institute’s Head of Technical AI Engagement and Professor of Computer Science and Philosophy. He is currently the Kimberly J. Jenkins Distinguished University Professor of New Technologies and Professor of Computer Science, Professor of Economics, and Professor of Philosophy at Duke University.

He said: “The Ethics in AI Institute is very much what the world needs right now and I am thrilled to play a role in it. I hope to help the Institute engage directly with technical research and development in AI, thereby ensuring and increasing its beneficial impact. The University of Oxford is a perfect place to make such interdisciplinary connections given all the world-leading research conducted there already, but the needs for the Institute's work stretch around the globe.”

He has worked on a variety of topics in AI, and he has recently focused on how we should collectively decide on the objectives that AI systems pursue, as well as on rethinking whether and how we should consider AI systems as agents in the world.

LIVE LINK TODAY: LAUNCH of the Institute for Ethics in AI - YouTube

The Institute’s achievements to date include:

- Set up a regular programme of virtual public-facing events tackling major issues in AI ethics, with many thousands of people taking part.

- Appointed a Director, a Head of Education and Outreach, a Head of Technical AI Engagement, two Associate Professors in Philosophy, two postdoctoral fellows, and two doctoral students.

- Produced research, including Privacy is Power by Dr Carissa Véliz, which was named in The Economist’s books of the year for 2020.

In the coming months, the Institute will:

- Recruit more academics in key areas including AI and society and AI and political philosophy.

- Launch a new website and branding, with a particular focus on bringing AI ethics to new and larger audiences.

- Continue to grow the audiences attending its regular series of events.