RAGE FOR AND AGAINST THE MACHINE

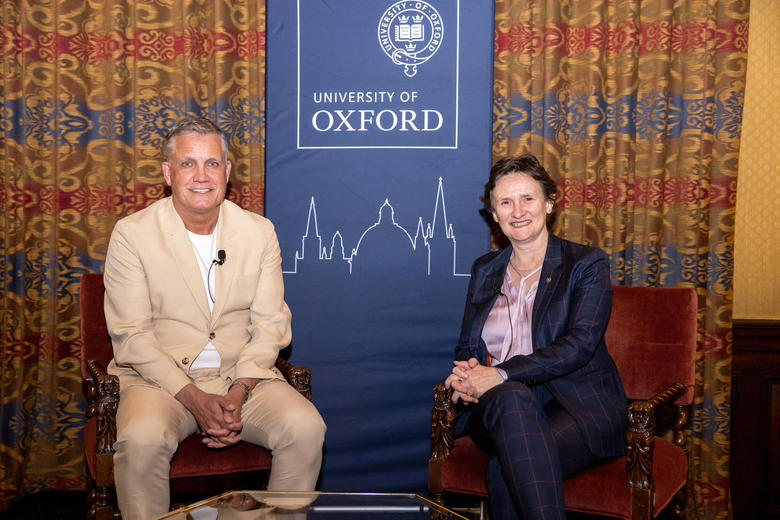

Paula Boddington discusses the infant field of ethics for Artificial Intelligence, with Richard Lofthouse

Published: 15 February 2018

Author: Richard Lofthouse

There’s a powerful idea circulating. It says that machines will overtake humans in intelligence later this century. This is the ‘singularity’. It’s a dystopian or utopian notion depending on your viewpoint, which for ethical purposes is a riddle that may have no solution.

This riddle lies at the heart of an intriguing book by Dr Paula Boddington (Corpus, 1980), a senior researcher in Oxford’s Department of Computer Science.

Paula Boddington, 13th February, 2018, in the Weston Library

Towards a Code of Ethics for Artificial Intelligence describes the singularity as a fear or expectation of ‘runaway control’, because an imagined machine pursues one value at the expense of humans – such as a machine manufacturing paperclips at the expense of anything and everything else (a fictional example tabled recently by Oxford AI authority Professor Nick Bostrom).

Google it and the machine spits out this: ‘The technological singularity (also, simply, the singularity) is the hypothesis that the invention of artificial superintelligence will abruptly trigger runaway technological growth, resulting in unfathomable changes to human civilization.’ In the fields of physics and mathematics, the term has a different meaning, concerning infinity and black holes.

Paula’s book is not about the singularity, but its weight and relevance in setting a context for discussing AI ethics are greatly bolstered by recent media hype about machine learning and the likelihood that we will all lose our jobs as the machines take over.

Over a cuppa, Paula says, ‘Actually this is an old idea, that a loss of perspective and balance is damaging to human well-being, like King Midas turning everything he touches into gold.’

A softer example of this sort of loss of balance is the filter bubble (the title of Eli Pariser’s recent book), which Paula mentions in conjunction with the Tay Chatbot, an experiment in which a chatbot quickly found its way down to a world of hatred and extreme views having been programmed to operate within Twitter. ‘This revealed not the weakness of the chatbot but the weakness of Twitter. It can bring out the worst in human nature by filtering people towards entrenched points of view.’

In journalistic argot, the headline that particular experiment created was, ‘Twitter taught Microsoft’s AI chatbot to be a racist asshole in less than a day.’

With a broad background in behavioural and applied ethics, moral psychology and philosophy, Paula notes that not all the examples are negative by any means.

If autonomous, self-driving cars halve the number of road fatalities by eliminating human error, that would presumably be a good outcome, a moral good.

She mentions a totally different example arising from a concurrent but separate piece of research she’s currently undertaking into medical ethnography.

‘I’ve noticed a surprising degree of overlap between medical ethics and the infant field of AI ethics,’ she says. ‘In practice there are situations where we could argue that patient human rights are not really met in hospitals. One right is access to a toilet, yet some patients go in continent and come out incontinent, a well-observed and troubling phenomenon. If a robot or ‘carebot’ helped you to the loo without falling over, that might be less embarrassing for the patient than calling for a nurse who is already under pressure, or reaching for a neatly stacked pile of disposable cardboard urinals.’

If these examples seem random and divorced from each other, that’s part of Boddington’s point in her book, which is broken down into eight chapters, each with up to 15 sub-sections, much like an undergraduate textbook. One of these sub-sections, occupying just two pages, is ambitiously titled, ‘Is There Such A Thing As Moral Progress?’

I suggest that this can’t be answered in two pages, but she explains that her goal is not to provide any answers but to facilitate a conversation that ‘might otherwise be too restrictive, too myopic.’

‘For many, although not all, in the AI community, technology is progress and it can’t be held back. It’s too easy to extend the same unwritten assumption towards human progress or moral progress, in ways that are simply untrue.’

She adds that even defining Artificial Intelligence is problematic.

‘It’s very difficult to define AI. People who put definitions on it are often shot down by others. To me it means any situation where technology intervenes on behalf of humans, taking their place, or extending our reach, in thought, action or decision-making.’

I later realize that such a definition informs the several mentions in Boddington’s book of the Nuremberg Trials of Nazis that followed the end of World War Two. ‘The quintessentially bad excuse of the twentieth century was, “I was only following orders,”’ she writes.

The quintessentially bad excuse of the twenty-first century may yet be, ‘I was only following the dictates of a machine (or algorithm).’

She returns to the example of autonomous driving. In aggregate, fatalities may be reduced and road safety extended, but in particular instances could a driver blame the machine for running someone else over? It starts to sound like a dystopian extension of the Little Britain gag, ‘Computer says no.’

‘We can’t yet say if people are more obedient to machines than to humans, but I think the very question begs for careful discussion…’

Recently back from a conference in New Orleans and signed up to loads more in 2018, Paula notes the current boom in this subject. ‘People are really asking these questions of the future, and what it’ll look like,’ she says.

But the readiness of cash-awash tech brands like Google and Facebook to sponsor the conferences might be interpreted as virtue-signaling rather than real engagement, at a time of unparalleled political scrutiny of their activities.

Asked how she would like the future to develop, Paula says that in the area of ethics she much prefers concrete proposals to merely aspirational ones. She contrasts the ‘very vague and aspirational’ charter of values called the Asilomar AI Principles (after the place in California) with the more practical work of the Institute of Electrical and Electronics Engineers (IEEE), which is working on ways of developing ethically aligned design for autonomous and intelligent systems.

‘That’s where the bit of my career currently focused on medical ethnography has had a surprising degree of overlap with AI ethics. There’s the theory of something, or ethics in this case, but then there is the practice.’

Towards a Code of Ethics for Artificial Intelligence (Springer, 2017).

Dr Paula Boddington has a BA in Philosophy and Psychology from Keele, a BPhil and DPhil in Philosophy from Corpus Christi College, Oxford, and an LLM in Legal Aspects of Medical Practice from Cardiff. She has held various lecturing and research posts in philosophy and in ethics, at Bristol, Cardiff, ANU, and Oxford, and is currently working on a more comprehensive outworking of the book she has just published.