WE'RE ALL PLAYING RUSSIAN ROULETTE

The academic field of existential risk is booming - should we worry?

Published: 2 April 2020

Author: Richard Lofthouse

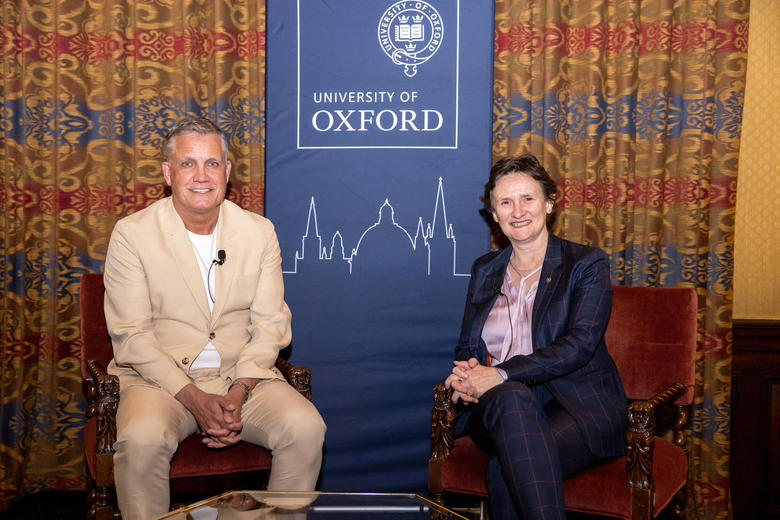

When Dr Toby Ord and I meet and don’t shake hands, the COVID-19 pandemic is unfurling but the café is still open. A week later, it closed.

Could there be a better time to have just published a book on the future of humanity and this thing called ‘existential risk’?

Moral philosopher Dr Toby Ord (Balliol, 2003) has limited time and we don’t exactly discuss it, but the Guardian has already pounced on the section about pandemics, printing verbatim a chunk of Dr Ord’s book The Precipice: Existential Risk and the Future of Humanity.

Numerous artists have had their moment trying to visually depict the end of the world.

In person, Toby can’t be accused of doom and gloom. An Australian by birth, he evinces a sunny disposition.

A Senior Research Fellow at Oxford’s Future of Humanity Institute, he received wide media interest for founding Giving What We Can alongside fellow Oxford philosopher Will MacAskill in November 2009.

The idea is that you donate a significant chunk of your salary to a very effective charity, perhaps 10% but maybe a quarter.

A branch of what is known as ‘effective altruism’, the movement claims over 4,000 active members and cumulative donations over $125m.

At the end of our interview he says that on balance he’s an optimist about the future of humanity.

But the enormous caveat, and the subject of The Precipice, is that we have greatly increased the risk we pose to ourselves through expansionist human success, including rampant technological and scientific progress – call it, altogether, power – without a commensurate rise in wisdom.

The very word ‘progress’ is double-edged. One of the points of the book is simply to note how we routinely discount risks that should be taken more seriously, particularly if we could weigh them dispassionately and do something about them.

‘We have the power to end our story but lack the wisdom to ensure that it doesn’t,’ he says, echoing US astronomer and public scientist Carl Sagan (1934-96), to whom he has been compared.

9 March 2020. Dr Toby Ord, Weston Library, Oxford.

At the very start of the book there is a frontispiece woodcut by Hilary Paynter, showing a sinuous, narrow footpath winding up the side of a vertiginous mountain. Far below in a forested floor, the same footpath recedes far into the distance. If the valley floor represents the slow, flat path of most of human history, it began to kick upwards with the agricultural and scientific revolutions. It kicked up even more steeply with the industrial revolution and has climbed very steeply since. By now, we’re well up the ‘Precipice’ of the book’s title. It’s a long way down and the air has thinned.

Is any of this actually true, I ask?

‘The ‘Precipice’ in my book title is the name I give to the period we have lived in since the invention of nuclear weapons, where humanity is at risk from itself, and where these anthropogenic risks are far higher than the natural risks. We live in a time of greatly heightened risk. I estimate that the chance we don’t get through the next hundred years is about 1 in 6. There is a very appreciable chance that this is the end.’

In his 2003 book Our Final Century: Will Civilisation Survive the Twenty-First Century?, Cambridge cosmologist Martin Rees memorably noted that 1 in 6 are the odds of Russian roulette, where you fire a pistol at your head for a wager but only one of the six chambers has a bullet in it.

He wrote, ‘…unless you hold your life very cheap indeed, this would be an imprudent – indeed blazingly foolish – gamble to have taken.’

Yet that is what humanity is doing in 2020, argues Dr Ord, quite apart from the COVID-19 pandemic, which is more akin to a warning shot than the end-of-times catastrophe that he is preoccupied with.

By natural risks he means asteroid impacts, supervolcanoes and stellar explosions, the risk of each carefully considered in Chapter 3. The giveaway clue is that we’re still here after 200,000 years, suggesting that these wipe-out events are not worth losing sleep over.

That information alone could provide a lot of comfort to individuals who have made life-changing decisions around one of those risks – certain ‘doomsday preppers’ in the US, for example, the subject of a recent popular TV series of the same title. There are some risks that Hollywood has devoted a lot of time to that are not worth losing sleep over.

All the real risks, the non-natural risks, are self-orchestrated, Toby says. They start with nuclear weapons in 1945 and they haven’t gone away. But the twenty-first century opens up to all manner of proliferating horrible possibilities including an engineered (i.e. deliberate) pandemic that could make COVID-19 look like a polite cup of tea with the Queen.

The most obvious existential risk of nuclear war is completely familiar to any post-1945 generation. We have learned to live with it but it hasn't disappeared despite the end of the Cold War.

What does Toby mean by existential risk as such, and where did the term originate? He attributes it to Oxford colleague Nick Bostrom, who wrote a paper in 2002 titled ‘Existential Risks: Analyzing Human Extinction Scenarios and Related Hazards.’ Bostrom is Professor in the Faculty of Philosophy at Oxford and founding Director of the Future of Humanity Institute.

Existential risk means exactly what it says, argues Dr Ord.

‘Either the entire population is killed or there is an unrecoverable collapse of civilisation; it [such an event] wouldn’t just destroy our present but our entire future as a species. That’s what makes an existential threat a unique kind of threat, and worth thinking about even in cases where the probabilities of it happening are very small.’

Has the risk gone up further in the opening two decades of our twenty-first century? This one is harder to answer. Rees hoisted a beautifully written red flag in his 2003 book when he estimated a civilizational collapse as 1 in 2, worse odds than Ord’s 1 in 6.

But Ord says that Rees meant any kind of collapse of civilisation. ‘When he says collapse he means things might pick up, whereas I’m talking about an event from which we cannot recover. We’re estimating different things.’

The idea of a Doomsday Clock is not new, but has received extra attention in recent years.

Ord continues, ‘From speaking to him [Rees] I think that he would put the chances of extinction at lower than 1 in 6.’ In other words Ord says the risk is greater now than it was then; it isn’t coincidence that he has trained his sights on ‘worst case scenarios’ which, he notes gravely, we routinely ignore.

Is this in fact a new academic field of inquiry? Yes; but the subject of the fate of humanity is not new as a speculative concern among thinking individuals, quite apart from thousands of years of eschatological millennialism that foresaw in different cultures various ‘ends of worlds’.

Ord says that the first non-religious modern awareness of the sweep of humanity and its possible ending sprang up in the wake of Darwin’s Origin of Species. Then you have early twentieth century figures like H.G. Wells who expressed the concept; and then you have the atomic bomb but you also get to Rachel Carson’s Silent Spring (1962), where suddenly the environment is perceived to be vulnerable to collapse rather than inexhaustible.

The early 80s then saw a clutch of exceptional thinkers wrestling with the issue – Jonathan Schell, Carl Sagan and Oxford moral philosopher Derek Parfit, the latter one of Ord’s supervisors for his doctorate and at the time of his death in 2017 an Emeritus Senior Research Fellow at All Souls College.

‘We’re constantly discovering new risks that we didn’t know about a couple of decades earlier, and there could be more to come’, Ord says disconcertingly.

The good news is that while climate change is terrifying and irreversible, he doesn’t rate it very highly as an existential threat, at 1 in 1000 for the next century. It could wreck business as normal and it could kill or displace millions, but it might not lead to total collapse.

That’s the good news.

The bad news is that he puts the odds much higher for an engineered, deliberate pandemic (1 in 30), and higher still for an unaligned artificial intelligence subverting its human masters (1 in 10).

Aware that many people won’t be familiar with either, he goes to special lengths to explain both in a chapter titled ‘Future Risks’. If you were very short of time you might head there.

He notes that humanity has survived more than one bubonic plague, yet the Black Death killed as many as half the European population over six years. The trouble with 2020 is that global population is a thousand times larger and we fly everywhere and live crammed together in cities. Guess what.

Countering the doom is modern medicine and cross-border collaboration and all the good sides of modernity. 200 years ago we didn’t even know what a virus was. Now we can look forward to a COVID-19 vaccine. Where’s the real risk?

He explains in terrifying detail that there is real risk of accidental leakage from ‘secure’ facilities where different governments play around with pathogens for good and ill and don’t tell their citizens about it. Many such leakages have happened but been hushed up – a detailed table lists some of them, such as the UK top level security facility that leaked foot and mouth disease into ground water from a bad pipe, was closed, inspected and reopened, and then promptly did it again just two weeks later.

But then we move onto the really terrifying stuff where for example the genome of smallpox is freely available on the web, and where there is a direct conflict between competing scientists in a free information exchange environment (the ‘unilateralist's curse’), and malevolent actors who could do goodness knows what with the information. And then throw in unknown bioweapon programmes (fifteen countries and counting) and a treaty to manage them not worth the paper it’s written on.

On the subject of ‘unaligned artificial intelligence’ Ord goes to great lengths to explain what he means and why it matters. Some readers might still insist that it’s not the big deal he makes out, and there has been a lot of misinformation in popular media. But he sketches the vertiginous rise of AI in recent years after a slow start in 1956, and the fact that in 2017 the company DeepMind developed a computer that taught itself from scratch to Grand Master-beating chess ability in four hours.

The danger is that within the next decade or two this level of ability could lead to what’s called Artificial General Intelligence, where some aspect of machine learning gets so clever that it acts to prevent being shut down by its human masters, perhaps planting millions of replica codes in other computers, a sort of grand malaise written in code that could compromise humanity’s future to such a degree that we could not overcome it.

It would not be an evil zombie or robot army coming over the horizon like a Hollywood finale. It would be a poisoning of the internet so that nothing worked and civilization fell to bits, or some version of that.

The other point Ord is keen to make is just how young we are as a species. The fossil record for other mammals and hominoids suggests we have millions of years in our future, against which the 200,000 years of our past make us the evolutionary equivalent of a sixteen-year-old adolescent.

‘A bit like an adolescent, we’re risking the whole of our future for the next ten minutes,’ he says.

Silly fad or legitimate concern? So-called 'prepping' has now engaged an estimated 3 million Americans, who consider how they would survive an existential risk, building up food reserves and other defences. The broad basis of their concern is not to be laughed at, says Dr Ord.

Whether this is avoidable, or whether humanity could ‘grow in wisdom’, are very moot points of course, but Toby notes how the ‘moral compass’ has risen in recent centuries, and how in so many obvious ways we have genuinely progressed to better life expectancies, better medical understanding, reduced poverty and so forth.

What he wants now is for the powers that be to get real about the new risks and start to discuss and regulate them much more carefully than in the past. Once he is past sketching the risks, the book moves on to a section about how we have it within our power to climb down off the Precipice.

Not everyone will agree that humans can disinvent themselves or reverse things like technology. But to cite just one example, Toby notes that the international body responsible for the continued (if ineffective) prohibition of bioweapons (the Biological Weapons Convention) has an annual budget of just $1.4 million, ‘less than the average McDonald’s restaurant.’ We could try harder.

He holds out the ‘possibility…that we end this period of heightened risk by getting our act together and rising to these challenges, becoming the kind of society that ends these risks and ensures that they don’t recur.’

Asked whether it can really be true that we’re in a specially heightened era of risk, given that almost every generation of the past has worried about the end of times, typically eschatologically, Dr Ord replies,

‘I hope very much that I’m falling victim to some sort of standard human bias here, but there is a substantial weight of evidence to the contrary and very few critiques that deal with it. Yes, there are biases we fall into, but when the evidence mounts up as much as it does, we might want to start dealing with that too.’

The natural pandemic of 2020 falls readily into place within Dr Ord’s broader study of risk, but we didn’t want to make it the only subject of discussion. ‘Regulating live animal markets and animal welfare would be an obvious outcome of COVID-19, if this is indeed where the virus jumped across from animals to humans. It’s just one example of the many different threats to our wellbeing that I am interested in. The risk of this happening was well understood but the broader appreciation of the risk, leading to changes in human activity commensurate with the risk, were ignored, as was the virus itself in almost every country where it has taken root.’

The Precipice: Existential Risk and the Future of Humanity, Bloomsbury 2020, £25.00.

Dr Toby Ord did his BPhil at Balliol in Philosophy following a first degree in Computer Science and Philosophy at the University of Melbourne. In 2006 he commenced his DPhil at Christ Church where he was a Senior Scholar. He is now a Fellow at Balliol and Senior Research Fellow at the Future of Humanity Institute. He is the founder of Giving What We Can and co-founder of the Effective Altruism movement.