CLAMPING DOWN ON FAKE NEWS

A packed Sheldonian Theatre was backdrop to a lively discussion around fake news and public misinformation

Published: 3 February 2020

Author: Richard Lofthouse

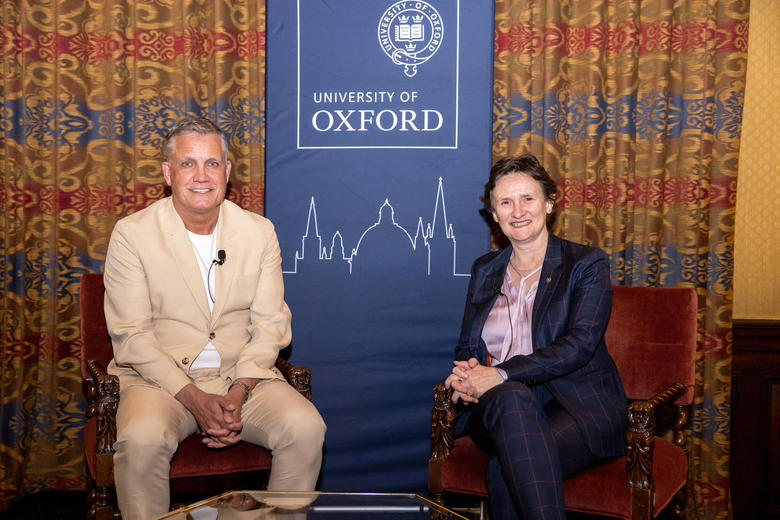

Titled ‘An Oxford Conversation about the impact of fake news on our lives’, the panel consisted of Sir Simon Stevens (Chief Executive of NHS England) and Damian Collins MP (former Chair of the House of Commons Digital, Culture, Media and Sport Select Committee), the discussion led by Sarah Montague (Presenter of BBC Radio 4’s World at One).

Introducing the evening, Professor Sir Rory Collins, Head of Oxford University’s Nuffield Department of Population Health, explained that the Department was putting on an event about what is typically regarded as a ‘media’ question because he had become deeply concerned about the effect of powerful online disinformation on public health.

‘Fake news affects the health of people,’ he said, citing two central recent UK examples, one being ‘anti-vaxers’ (anti-vaccine proponents) whose dubious advice to not vaccinate babies against measles has led to a resurgence of the disease in the UK and elsewhere.

Another case involves misinformation around the side-effects of taking statins. Persuading people not to take them has probably led to some of them having heart attacks and strokes.

Rory Collins described the report by the House of Commons Digital, Culture, Media and Sport Select Committee on Disinformation and Fake News as ‘a cracker’ and well worth a read.

The report paints a bleak picture of the ways that Facebook and other social media platforms are involved in the spread of disinformation in their pursuit of profits.

Damian Collins argued that ‘freedom of speech is not the same thing as freedom of reach.’ He argued for a legal requirement to reveal authorship of online posting, but also a more powerful digital mechanism to stem the otherwise wildfire-like spread of sometimes wildly false information.

The MP argued for the creation of a regulatory body to hold social media platforms to account, noting that equivalent bodies already exist in France and Germany.

Later in the evening a show of hands found a large majority in favour of such a body, although the handful of individuals who were against it raised legitimate points.

What is evidently false at a given point in time might later be overturned in legitimate ways, especially where science is concerned.

The panel contended that what they principally meant was demonstrable falsehood that currently whirls around the internet unchecked.

So-called ‘deep fake’ films were cited. In these, for example, video of a public figure such as former US president Barack Obama is manipulated so that he appears to say, with force of conviction and free volition, words that were originally said by President Bush. Such pure falsehoods should be removed without hesitation, argued the panel.

A regulatory body would not be a censor, but would set a legal framework compelling the platforms to act or face penalties.

Broadly, both speakers were insistent that the simplest regulatory mechanisms that govern other walks of life from medicine to banking remain almost entirely absent from social media. Almost inevitably, they contended, this has to end.

Otherwise, they argued, an entire legal duty of care is basically delegated to for-profit corporations like Facebook.

During the conversation Sarah Montague asked Damian Collins what was the worst thing he had seen while compiling his report. He replied that it was evidence that the persecution and ethnic slaughter of the Rohingya people of Myanmar was organised with impunity on Facebook, and that Facebook refused to do anything about it.

Sir Simon noted that if you search ‘anti-vaccine’ on Amazon, you currently get a top ten of books that are all basically untrue or at the very best highly dubious, some of them self-published. There has been a boom in online publishing around anti-vaxer themes despite the fact that one of the original sources of the idea, Andrew Wakefield, was struck off the medical register.

The point was made along the way that the very term ‘fake news’ is of course a shorthand with various meanings attached.

When US President Donald Trump helped to popularise it, he typically meant anything in the media he disagreed with. But for the purpose of the Oxford debate it referred to proven falsehoods masquerading as truth or pitched at unsuspecting audiences in calculated ways so as to deliberately sow seeds of doubt.

In the latter scenario, the personal opinion of a real person, which might be superficially persuasive but is demonstrably wrong, can be lofted up into the Twittersphere and given a whole new life by malevolent actors such as state-backed agents paid to meddle, plus automated ‘bots’ that search for key words and then bombard social media platforms with re-tweets and other forms of perverse dissemination.

Damian Collins described the Brexit referendum as ‘the petri dish of the US election’ that elected Trump, and the broader theme of democracy at risk was discussed in respect of free speech and credibility, one of the ultimate questions being how publics are protected from misinformation by serving politicians.

Biographies of the panel:

Damian Collins (St Benet's Hall, 1993) has served as the Conservative MP for Folkestone and Hythe since 2010. In October 2016, he was elected by the House of Commons as Chair of the Digital, Culture, Media and Sport (DCMS) Select Committee. In this role, he led the Committee’s inquiries into doping in sport, fake news, football governance, homophobia in sport, and the impact of Brexit on the creative industries and tourism.

The DCMS Select Committee held an extensive and high profile inquiry into “Disinformation and Fake News” between September 2017 and February 2019. It covered individuals’ rights over their privacy, the effect of online content on people’s choices, and digital interference in elections. It resulted in the publication of two landmark reports, and a sub-committee is working to ensure that new legislation and policies protect individuals from disinformation.

Sarah Montague is a journalist and presenter who has worked on a variety of BBC programmes including Today, Breakfast with Frost, Hardtalk, and Newsnight. She is currently a presenter of The World at One, and is known for her insight, depth of knowledge, and her ability to convey her thoughts accurately whether presenting on the radio, on television or in person.

Sarah spent her early career as a stockbroker for NatWest Capital Markets, before joining Channel Television in 1991. She went on to work for Reuters in 1995 and became a Sky News business correspondent in 1996, before joining the BBC in 1997. In addition to her work for the BBC, she has worked with leading businesses and organisations in a wide variety of sectors as a conference moderator, awards host and speaker.

Sir Simon Stevens (Balliol, 1984) is Chief Executive Officer (CEO) of NHS England. He is also accountable to Parliament for over £120 billion of annual Health Service funding. Simon joined the NHS through its Graduate Training Scheme in 1988. As a frontline NHS manager, he subsequently led acute hospitals, mental health and community services, primary care and health commissioning in the North East of England, London and the South Coast. He also served as the Prime Minister’s Health Adviser and policy adviser to successive Health Secretaries at the Department of Health.

Simon spent a decade working internationally in the USA, South America and Africa. He has been a trustee of the King’s Fund and the Nuffield Trust, and a visiting professor at the London School of Economics and Political Science.

In last year’s Oxford Conversation, the investigative journalist Nick Davies (who broke the WikiLeaks and phone hacking stories) and the previous Guardian Editor Alan Rusbridger discussed how changes in the ways that news is distributed have led to an explosion of uncontrolled sources of “information” on the internet and in social media. A video of that event